Software quality is a vast field that has been the subject of many studies, standards and tools for a long time (at least in “software time”). To make it accessible from different angles, we are starting a dedicated series that will gradually focus on big questions such as ‘Where does it come from?’, ‘Why use it?’, ‘How to measure it?’, etc.

Our objective is not to transfer all this knowledge to you in a few posts, Matrix-style (“I know software quality!”), but to paint the big picture by introducing and discussing its facets. For more in-depth reading, see the references below.

A case of multiple identities

So, what is software quality anyway? There are many ways to define it, such as:

- Meeting functional and non-functional requirements will ensure quality

- Quality is reached by complying with international standards

- Quality is defined by what the end user expects

- Quality is just another way to keep an eye on teams

- And of course: “I know quality when I see it”

These are all valid definitions, depending on your point of view.

And that’s the key, isn’t it? Software quality refers to a software product, and a product involves many actors: the design team, developers, testers, the validation team and support, but also the end user, management and, not to forget, the quality team itself.

Each actor has an expectation of what’s good, and how to represent it.

That is one of the pitfalls of software quality: a software product’s quality can be assessed, analyzed and viewed in very different ways, and yet all these views describe the same product. Each of them is important, and focusing on just one aspect while neglecting the others might lead to an overall negative perception.

So, it is no surprise that software quality is a whole, not just its parts.

A brief history of software quality

The evolution of software quality is a history of growth, not unlike ours. We start our life experience by ourselves, then we interact with others, guessing and adapting to what they need, until we learn to anticipate, plan and simply become better at what we do.

In its infancy (around the 50s), software quality was often a one-person affair: the same person was designer, developer and tester all at once. Work continued until the program “just ran”. Quality meant ironing out all the bugs that popped up during development.

It was not long (by the 70s) until the objective became more ambitious: quality would be reached when all requirements were met. This means being able to cover all cases described by the end user, or by a technical, functional or design analysis.

Inevitably, a strong link between quality and testing was forged: quality would be measured against test successes, and tests would explore all features to reach the targeted quality.

As teams started to expand, so did the professions. Developers received concepts from designers and fed testers with programs to validate. In this vertical relationship, each participant would do their job, then hand over the result to the next. This worked well, but not very efficiently: long delays, late testing, costly corrections, and aversion to change.

This arrangement had to go the way of the dinosaurs. From the 80s onward, several disruptive ideas took hold:

- Intrinsic quality by code analysis: Start using static and dynamic analysis tools on source code to assess its inner quality

- Healthier quality by early testing: Stop testing too late, bugs detected early are much less costly to fix

- Finer quality by automated testing: When properly described, low-level tests can be automated. Also, tests should not just verify the expected, but also automatically explore nooks and crannies for the combination nobody thought could happen.

- Broader quality by multiplied tests: As hardware got more powerful (you know, Moore’s Law), the computing power allowed running testing campaigns on several versions of a program on several targets. And with omni-channel experience, a function must now behave the same (and so be tested) in multiple contexts.

- Shared quality by change acceptance: Against the rigidity of old development processes, Agile methods proposed ways to eliminate the ‘tunnel effect’, connect all stakeholders and keep them engaged.

- Continuous quality by integrated approach: With DevOps, quality can become a part of the process. And as all components of the development lifecycle become accessible, quality can expand from its code- and test-centric definition to include more elements of the project.

Today, we have reached a point where software quality goes beyond conformity: it is also a maturity enabler.

The normative years

“Software is everywhere”: there are indeed very few industries today that don’t rely on some piece of software. Some even put software in their products.

And in the industrial realm, stakes are high, everything counts, and in no small amounts. This is why international organizations have worked for the past decades to produce standards dedicated to the various areas of the industrial world.

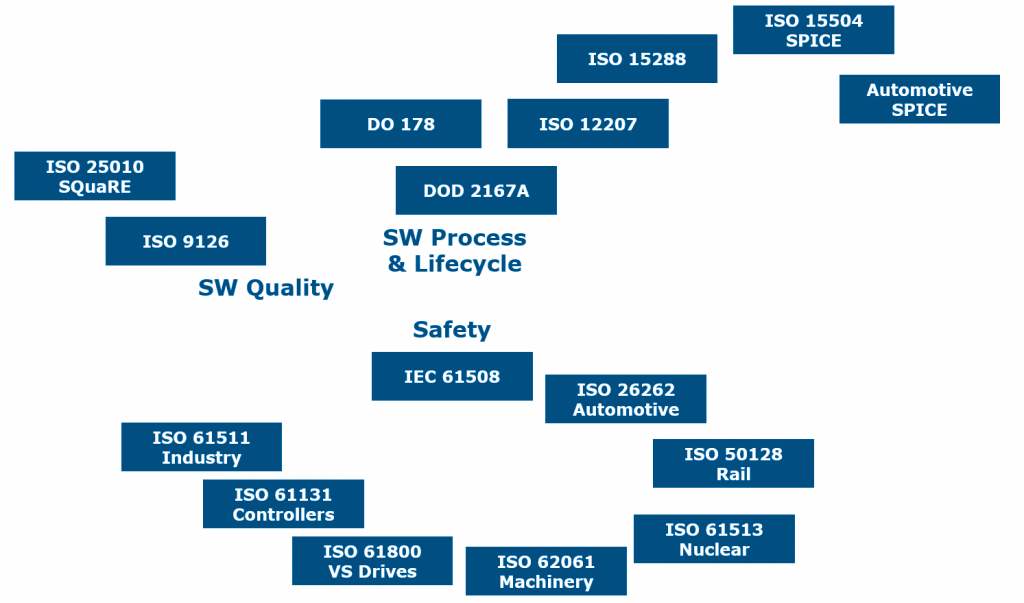

Software development has benefited from this initiative in many ways. Below is a simplified snapshot of three important areas for software:

- Software quality

- Software Process & Lifecycle: Connected to quality as it integrates the development processes

- Safety: Connected to quality when dealing with Safety Integrity Level (SIL)

Our objective here is not to discuss recommendations and methods for these standards, but to point out the density and volume of regulatory documents (see links below).

Such documents are important and lay the foundations for a strictly controlled and managed quality. However, they are not always straightforward to implement, and their rigorous approach can sometimes contrast with flexible and fast-paced practices such as Agile and DevOps. By joining these two halves (standards and flexibility) of the “quality coin”, we can start addressing the ‘multiple identities’ issue and aim at a better software quality representation.

Under the macroscope

Let’s continue expanding the scope. Software quality can be evaluated efficiently by analyzing source code and tests. These are natural candidates for quality assessment: they are direct products of the development phase and part of the daily grind.

But as we know, code is not just related to tests: it also fulfills needs (requirements), embodies a concept (design), gets complaints (bugs) or encouragements (enhancement requests). All these elements play a part in different areas of the project ecosystem and influence one another.

Taking these elements into account, with their state, attributes and links, produces a network of data that not only paints a global view of the project, but also opens the door to exciting new possibilities regarding software quality:

- Has the requirements specification phase been stabilized? Do all requirements have tests?

- Is the design compliant with our standard?

- Is the code complexity under control?

- What is the trend across test campaigns?

- What is the ratio of early detection for bugs?
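To make the last question concrete, here is a minimal Python sketch of how an early detection ratio could be computed. Everything in it is an assumption made for illustration: the `Bug` record, the phase names, and the choice of which phases count as "early" would all depend on your own project and tracker.

```python
from dataclasses import dataclass

# Hypothetical phase names; which phases count as "early" is a project decision.
EARLY_PHASES = {"review", "unit_test", "integration_test"}


@dataclass(frozen=True)
class Bug:
    bug_id: str
    found_in: str  # phase in which the bug was detected


def early_detection_ratio(bugs):
    """Share of bugs detected in an early phase (0.0 when there are no bugs)."""
    if not bugs:
        return 0.0
    early = sum(1 for bug in bugs if bug.found_in in EARLY_PHASES)
    return early / len(bugs)


# Made-up sample data, not taken from a real project.
bugs = [
    Bug("BUG-1", "unit_test"),
    Bug("BUG-2", "review"),
    Bug("BUG-3", "production"),
    Bug("BUG-4", "validation"),
]
print(f"Early detection ratio: {early_detection_ratio(bugs):.0%}")
```

A single value says little on its own; tracking it from one release to the next is probably where it becomes useful.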

And in addition, exploiting links can help investigate even further:

- A subset of requirements has been modified. Were the associated tests updated?

- Tests for a specific source code file are green, but bugs related to this code keep appearing. Maybe tests are not adequate?
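The two link-based questions above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Requirement`, `ProjectTest` and `Bug` records, their fields, the dates and the `min_bugs` threshold are all hypothetical, and a real project would load this data from its requirements, test and issue-tracking tools.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ProjectTest:
    test_id: str
    last_updated: date
    passed: bool
    covers_files: set = field(default_factory=set)


@dataclass
class Requirement:
    req_id: str
    last_modified: date
    tests: list = field(default_factory=list)


@dataclass
class Bug:
    bug_id: str
    source_file: str


def stale_tests(requirements):
    """(requirement id, test id) pairs where the test predates the requirement change."""
    return [
        (req.req_id, test.test_id)
        for req in requirements
        for test in req.tests
        if test.last_updated < req.last_modified
    ]


def suspicious_files(tests, bugs, min_bugs=2):
    """Files whose tests all pass although at least `min_bugs` bugs point at them."""
    bug_count = {}
    for bug in bugs:
        bug_count[bug.source_file] = bug_count.get(bug.source_file, 0) + 1
    flagged = []
    for path, count in sorted(bug_count.items()):
        covering = [t for t in tests if path in t.covers_files]
        if count >= min_bugs and covering and all(t.passed for t in covering):
            flagged.append(path)
    return flagged


# Made-up sample data, not taken from a real project.
t1 = ProjectTest("T-1", date(2024, 1, 10), True, {"parser.py"})
t2 = ProjectTest("T-2", date(2024, 3, 5), True, {"parser.py", "export.py"})
r1 = Requirement("REQ-1", date(2024, 2, 1), [t1, t2])
bugs = [Bug("BUG-7", "parser.py"), Bug("BUG-9", "parser.py"), Bug("BUG-11", "export.py")]

print(stale_tests([r1]))             # tests older than the requirement they verify
print(suspicious_files([t1, t2], bugs))  # green tests, recurring bugs
```

Neither result is a verdict. A stale test may still be valid, and a flagged file may simply be heavily used; both are prompts for a closer look.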

Thanks to the systematic digitalization of project data, software quality can now encompass multiple aspects of the software production process. We have upgraded our “quality coin” to a “quality diamond”!

The tree and the forest

Reaching quality is not as straightforward as a walk in the park. It is more like an excursion in a forest. A deep forest, full of promise.

In the next posts, we are going to walk the main paths of software quality and focus on some specific areas as well. But if you want to explore on your own, here are a few links to get started.

- Starting with software quality

- A few quality-related standards

- Software standards, Normen und Modelle (in German)

2 thoughts on “Software quality: Origin story”

The story of software quality seems to be about how to better discover and communicate changeable measures of quality in an increasingly efficient feedback loop within the creative process.

Thank you for your comment: I agree, software quality is a moving and changing target: not only do we have to adapt to evolving expectations along the project life cycle, but as development techniques and target environments multiply, we have to enrich our toolbox to imagine smarter testing.

Exciting times!