In C/C++ applications, most scalar variables are defined as ‘int’. Do these applications deal with lots of large numbers that need 32-bit integers? Not likely, most of the applications we build have “human-sized” data values in the tens, hundreds, or thousands; think of speed, distance, age, or the number of students. Even the Unix Epoch Clock hasn’t overflowed 32-bits yet (it was 0x64258290 when I just checked).

Because C was built to be fast and “close to the hardware” it makes sense that the integer types map to the underlying machine architecture. Integers that are 8, 16, 32, and 64-bits are great for speed[1], but not great for maintainability, safety, and security; and certainly not great for defining and enforcing functional ranges.

So why do we use so many int types? I think it’s mostly because of habit, we think “number” and we type int, loop index: int, array index: int, speed: int, altitude: int, it’s quick and seems harmless, right?

Well, if you’re careful it might be harmless, but experience tells us that this habit leads to security vulnerabilities related to buffer overflow (bad indexing, pointer math), signed integer overflow (undefined behavior in C/C++), and many run-of-the-mill bugs caused by out-of-range values.

Terminology

A quick time-out for terminology. I’ll use physical range to mean the values that “fit” into a particular data object, and functional range to mean the values that are appropriate for that same object. So, an object that stores automotive speed in mph, might have a physical range of 0 to 255 (unsigned char), and a functional range of 0 to 150.

What’s the problem with using int everywhere?

You’ve read this far, and might be thinking: “fine, most scalars are int’s, so what?” Well, let’s think about how the lack of explicit functional ranges affects Maintainability, Safety, and Security.

Maintainability

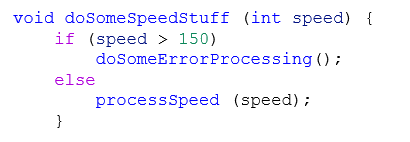

When information is hard-to-find, or duplicated, it will likely lead to inconsistencies and bugs. In most languages, functional ranges are defined in the requirements and then implicitly implemented in the logic, like this:

Obviously, if someone asks you what the maximum supported speed is, it might take you some searching to answer, rather than just reading the code.

Safety

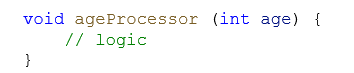

Systems with a safety requirement generally require boundary testing. A common approach is to ensure that Min, Mid, and Max values for each input are tested, and to also perform equivalence class analysis to break each functional range into sub-ranges that act in a similar way. For example, an “age” input might be broken down into 0-17: child, 18-65: adult, 66-100:senior. But if I give you the following code and ask you to test at the boundaries, what tests would you create?

Tests for INT_MIN and INT_MAX are probably not that valuable.

Security

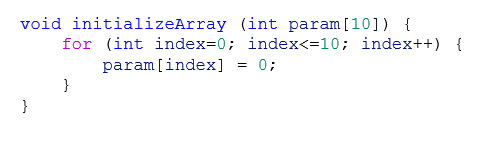

Every year, researchers identify hundreds of security vulnerabilities in software systems. Two of the more common coding errors that lead to security vulnerabilities are memory corruption from buffer overruns, and integer overflow caused by implicit casting and type promotion. For example:

You might not quickly see the overflow in this example:

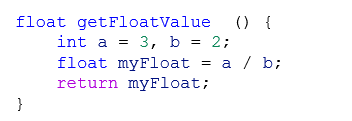

You might not realize that myFloat will be 1.0

Int problem summary

One of my core beliefs about programming is that the code should be as explicit as possible. Explicit means well thought out APIs, descriptive variable names, verbose comments, and well-defined types. Good code should formalize everything that the programmer knows about the problem, and this should include the functional ranges.

Making everything an int is like a structural engineer specifying that the supports for a building should be “steel beams,” or the foundation should be “concrete” with no additional detail.

Things that could help to solve the int problem

There are multiple approaches being used to combat the safety and security problems. Mostly we’ve been building more static analysis tools, but I’d argue that the core problem is that most languages don’t have built-in features to make writing Maintainable, Safe, and Secure applications easy; and so, we spend our time finding bugs after they’ve been introduced, rather than preventing them in the first place.

Below are some ideas for making applications more explicit.

Typedefs

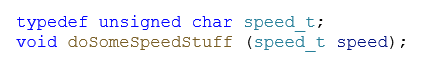

When you’re defining a function, class, or structure, you’ve got a good idea of the data ranges you expect, so why not take the time to formalize them. For example, defining doSomeSpeedStuff() this way could help quite a bit:

C/C++ allows typedefs, but they’re rarely used in a way that improves maintainability; mostly they’re used for portability, to ensure, for example, that a data object is 16 bits on all platforms by using int16_t. But why not use typedefs to impart information about your intent?

Especially if all other functions and data objects consistently used speed_t when dealing with speeds!

Contracts

Contracts[2] were first proposed in the mid 1980’s as an idea for improving function definitions so that users have enough information to use them correctly. A contract requires the specification of pre-conditions: what the component expects of its inputs, post-conditions: what the component will guarantee about its outputs, and invariants: conditions that will always be true. There’s been much discussion about including a contract facility in the C++ standard (in fact, contracts were part of a C++-20 draft, and are now being considered for C++-26). Much of the dissatisfaction with the proposals has been with the semantics and side-effects proposed.

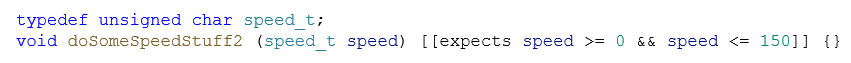

I find the pre-condition part of contracts especially interesting as it would allow each method to implement functional ranges for scalar inputs. Consider this version of doSomeSpeedStuff() with preconditions added:

This is a trivial subset of what has been proposed for C++ contacts, but you can see the power of it; a developer can quickly scan the definition and know the type and expected range for all parameters. This seems nice, but haven’t we seen a language with a feature like this before?

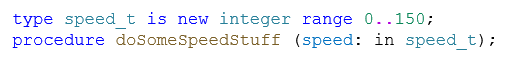

Ada

When I first used Ada in the early 1990’s, I found it a frustrating experience. The ability to create types that captured the functional ranges, was immediately attractive to me, enabling me to write:

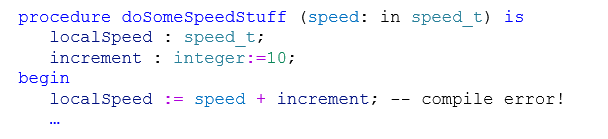

This ensured that the internal logic of this function would never “see” an out-of-range value for speed! On the other hand, this feature came with a cost, I couldn’t add an integer variable to a speed_t. What! How does this not compile? Are you kidding me?

As I got used to the language, I started to appreciate the requirement to be explicit. If I wanted to allow integers to be added to speed_t, I needed to define an operator for that, and this made me think about how each type should be used at the time I was writing the code.

This “extra” work made initial development slower but resulted in many fewer defects in general; and none of those bugs caused by one requirement saying that a message ID was 8-bits and another requirement saying it was 16-bits.

Summary

Anyone who’s been building software for a while realizes that there are no shortcuts to creating Maintainable, Safe, and Secure code. It’s a zero-sum game, you can invest the time during development or during testing and deployment. Spending time to make your code more explicit, will pay dividends over its lifecycle.

Research in this area

My research group has been thinking about this problem for the last year or so and has developed a product to help developers write more explicit code. If you think this sounds interesting, and would like to participate in the beta?

[1] Good for efficiency because minimal assembly instructions are needed to implement operations on machine-sized variables

[2] Design by contract Wikipedia page