We have been talking about monitoring and measuring software quality for several posts now, from its origins to its benefits for the whole team. All of this is very nice, but we feel it is time to show software quality control in a real use case. Theories and concepts are important, but motivation comes from knowing that “it’s real” and “it works”.

So, follow us on our software quality journey. We’ll be honest and show you what went well and what went wrong. In the end, we’ll discuss whether the gain was worth the cost.

Measuring software quality in a real experiment

One year ago, we were working on an internal project. It had started a few months earlier and was growing with increasing usage and evolving needs. Seeing that it was becoming more than a simple side project, we decided to start monitoring its quality and put all our good advice to the test. Using our own tool, Squore, to monitor and measure its quality was a no-brainer, since we developed it for exactly that purpose.

Even as a small project, it had to fulfill the needs typically found in industrial projects:

- Source control management

- User and software requirements

- Unit and integration tests

- Development guidelines

- Deliveries

- Documentation

As with any project, there were two main phases:

- Start the engine for measuring software quality

- Start driving: set objectives, reach milestones, course correct

This post focuses on the first phase, answering the question: what was the cost of starting that monitoring engine?

Starting the software quality measuring engine

Where are the project data?

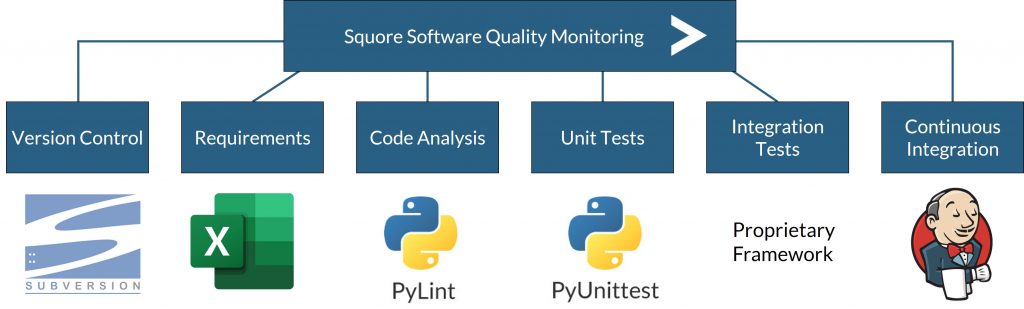

That is the usual first question. Here’s the rundown:

- The Python project code is under SVN source control

- Requirements are listed in Excel sheets

- Code analysis is done by Pylint

- Unit tests are handled by PyUnitTest

- Integration tests are conducted by a proprietary framework

- Continuous Integration is orchestrated by Jenkins + Blue Ocean

As mentioned before, these are typical components of a development project’s ecosystem, and we wanted each of them to play a part in our monitoring process.

How to feed these data to the monitoring process?

Now the real work starts. We need to tell our tool where to find the data and how to read it, and we only want to do that once.

Easy part: 5 minutes for the out-of-the-box connectors.

Squore makes part of this work easy. Out of the box, it provides a Python static code analyzer and connectors for SVN, Pylint, and PyUnitTest. Invoking the appropriate connectors and pointing them at the right data took us only a few minutes.
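Squore’s own Pylint connector handled this for us, so we never wrote such code. To give a feel for the kind of data a connector consumes, here is a minimal Python sketch (not Squore’s implementation) that reads a Pylint report produced with `pylint --output-format=json` and counts messages per file and per message type. The sample report is made up and keeps only a few of the fields Pylint actually emits.

```python
from __future__ import annotations

import json
from collections import Counter

# Made-up excerpt of a report from `pylint --output-format=json`;
# real reports contain more fields per message (line, column, message text...).
SAMPLE_REPORT = json.dumps([
    {"path": "app.py", "type": "convention", "symbol": "missing-module-docstring", "message-id": "C0114"},
    {"path": "app.py", "type": "warning", "symbol": "unused-variable", "message-id": "W0612"},
    {"path": "util.py", "type": "convention", "symbol": "invalid-name", "message-id": "C0103"},
])


def summarize_pylint(report_json: str) -> dict[str, Counter]:
    """Count Pylint messages per file, broken down by message type."""
    per_file: dict[str, Counter] = {}
    for msg in json.loads(report_json):
        per_file.setdefault(msg["path"], Counter())[msg["type"]] += 1
    return per_file


if __name__ == "__main__":
    for path, counts in summarize_pylint(SAMPLE_REPORT).items():
        print(path, dict(counts))
```

Counting per file and per type is the raw material a rating model can later turn into indicators such as message density.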

Development part: 6 hours

Two data sources remain, for which we have no direct solution: the internally defined requirements in Excel format, and the proprietary integration test framework. Fortunately, Squore offers a way to handle each of them:

- Configure the generic Excel connector to specify where the relevant columns are and what they mean

- Develop a new ad-hoc connector for the integration test framework, using the Squore development toolkit
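In our setup, the Excel connector is configured rather than programmed, and its configuration format is not reproduced here. The underlying idea, mapping spreadsheet columns to a meaning the tool understands, can be sketched in a few lines of Python. This assumes the requirements sheet has been exported to CSV; the column headers and the `COLUMN_MAP` names are hypothetical.

```python
from __future__ import annotations

import csv
import io

# Hypothetical spreadsheet headers mapped to what they mean for monitoring.
COLUMN_MAP = {
    "Req ID": "id",
    "Title": "title",
    "Status": "status",
    "Verified By": "test",
}

# Hypothetical CSV export of the requirements sheet; extra columns are ignored.
SAMPLE_SHEET = """Req ID,Title,Status,Verified By,Owner
REQ-001,Import project data,Implemented,test_import,team-a
REQ-002,Export report,Draft,,team-b
"""


def load_requirements(csv_text: str, column_map: dict[str, str]) -> list[dict[str, str]]:
    """Keep only the mapped columns, renamed to their meaning."""
    reader = csv.DictReader(io.StringIO(csv_text))
    # Fail early if the sheet layout changed and a mapped column disappeared.
    missing = set(column_map) - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    return [
        # Short rows yield None for absent cells; normalize them to "".
        {meaning: (row[header] or "").strip() for header, meaning in column_map.items()}
        for row in reader
    ]
```

Keeping the mapping as data rather than code is what makes it a "tell it once" step: if the sheet layout changes, only the mapping needs updating.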

In total, counting development, testing, and integration, it took us about six hours, a short working day, to bring all our project data into the software quality measurement process. And of course, this is a one-time cost.
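Our integration test connector was built with the Squore development toolkit, whose API we don’t reproduce here. The core of any such connector, though, is a parser that turns the framework’s native output into structured results. Here is a minimal Python sketch of that step, assuming a hypothetical log format: the `[IT]` line layout, the regular expression, and the CSV columns are all invented for illustration.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Hypothetical log line format of the in-house framework, for example:
#   [IT] import_project ........ PASS (2.31s)
LINE_RE = re.compile(
    r"^\[IT\]\s+(?P<name>\S+)\s+\.*\s*(?P<status>PASS|FAIL|SKIP)\s+\((?P<secs>\d+(?:\.\d+)?)s\)$"
)


@dataclass
class IntegrationResult:
    name: str
    status: str
    duration_s: float


def parse_log(lines: list[str]) -> list[IntegrationResult]:
    """Extract one result per matching line; other log lines are ignored."""
    results = []
    for line in lines:
        match = LINE_RE.match(line.strip())
        if match:
            results.append(
                IntegrationResult(match["name"], match["status"], float(match["secs"]))
            )
    return results


def to_csv_rows(results: list[IntegrationResult]) -> list[str]:
    """Render results as simple CSV rows, a neutral format for a downstream import."""
    rows = ["name,status,duration_s"]
    rows += [f"{r.name},{r.status},{r.duration_s:.2f}" for r in results]
    return rows
```

Separating parsing from output formatting keeps the framework-specific part small, which is what made a one-day integration realistic.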

How to automate the process?

Next, we have to connect the monitoring to our Jenkins Continuous Integration setup. We do this by using the “Squore agent” to extend our existing Jenkins pipeline with a command line that will:

- Run the data connectors mentioned above

- Feed their output into Squore to launch the quality rating mechanism

This step took us less than an hour, since Squore can generate a ready-made command line for exactly this purpose.
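Our actual change was a few lines in the Jenkins pipeline. To illustrate the ordering in the same language as the project, here is a Python sketch of a script a pipeline stage could call: it produces the raw data first, then hands over to the Squore agent. The agent command is a placeholder, since the real one is generated by Squore; the `src`/`tests` directory names and the `pylint.json` output file are assumptions.

```python
from __future__ import annotations

import shlex
import subprocess
import sys

# Placeholder only: Squore generates the real agent command line for the project,
# and its syntax is not reproduced here.
SQUORE_AGENT_CMD = ["echo", "run-squore-agent-here"]


def pipeline_steps(src_dir: str, tests_dir: str) -> list[tuple[str, list[str], str | None]]:
    """(step name, command, optional file capturing stdout), in execution order."""
    return [
        ("pylint", [sys.executable, "-m", "pylint", "--output-format=json", src_dir], "pylint.json"),
        ("unit tests", [sys.executable, "-m", "unittest", "discover", "-s", tests_dir], None),
        ("squore", SQUORE_AGENT_CMD, None),
    ]


def run(steps, dry_run: bool = True) -> list[str]:
    """Print (and optionally execute) each step; returns the printed command lines."""
    shown = []
    for name, cmd, out_file in steps:
        line = shlex.join(cmd)
        print(f"[{name}] {line}")
        shown.append(line)
        if dry_run:
            continue
        # check=False: Pylint exits non-zero when it reports messages, and failing
        # tests are data for the quality model, so the pipeline keeps going.
        if out_file:
            with open(out_file, "w", encoding="utf-8") as fh:
                subprocess.run(cmd, stdout=fh, check=False)
        else:
            subprocess.run(cmd, check=False)
    return shown
```

The dry-run default makes it easy to check the command sequence locally before wiring the script into a Jenkins `sh` step.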

Measuring software quality: Are we there yet?

Let’s summarize. After only one full day of work:

- We have connected our data with existing standard connectors

- Our proprietary data is also connected thanks to some development

- Each time the Jenkins pipeline is triggered, Squore creates a new analysis version of our project

Since Squore comes with a complete rating model, indicators for source code, tests, and requirements were computed automatically, and dashboards to monitor them were available in its web interface.
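Squore’s rating model is much richer than this and we don’t reproduce it here, but the basic principle of turning a raw measure into a rating level can be sketched in a few lines of Python. The seven-level A to G scale, the thresholds, and the “Pylint messages per 100 lines” metric are illustrative assumptions.

```python
from __future__ import annotations

from bisect import bisect_right

# Illustrative seven-level scale, best (A) to worst (G).
LEVELS = "ABCDEFG"
# Hypothetical exclusive upper bounds for levels A..F on a "lower is better"
# metric; anything at or above the last bound is rated G.
THRESHOLDS = (1.0, 2.0, 5.0, 10.0, 20.0, 50.0)


def message_density(messages: int, lines_of_code: int) -> float:
    """Pylint messages per 100 lines of code."""
    return messages * 100 / lines_of_code


def rate(value: float, thresholds: tuple[float, ...] = THRESHOLDS) -> str:
    """Return the level whose range contains value."""
    if len(thresholds) != len(LEVELS) - 1:
        raise ValueError("need one threshold per boundary between levels")
    # bisect_right counts how many bounds are <= value, i.e. the level index.
    return LEVELS[bisect_right(thresholds, value)]
```

For example, 14 messages in 200 lines gives a density of 7.0, which falls between the bounds 5.0 and 10.0 and is rated D. A real model would then aggregate many such ratings across files, tests, and requirements.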

We were ready to start – what happened then?

Read more in the next blog post, or watch the video for more details:

- How to reach continuous quality monitoring? 5:24

- Quality gates used to assess OKR 7:36

- An exciting first week of monitoring 10:15

- Benefits reached after one year 11:50

- Focus on tests, technical debt and requirements 14:03

- Focus on the review process 19:56

- Are our quality gates efficient? 22:20

- How to improve our process? 24:38
