Fuzz Testing or Fuzzing is establishing itself as the number one technique for highly automated software testing and is the favorite tool of security researchers and hackers alike, whether they wear a white or black hat. But … why? And how?

Let’s start from the very beginning. Where do the bugs come from that an attacker may or may not exploit? From us developers. Writing code is an extremely error-prone activity, and writing bug-free code on the first attempt is next to impossible. Thus, we test. And test. And test…

Testing

Writing tests is a time-consuming and error-prone activity, which most developers consider annoying or at least less interesting than working on the actual features. On the other hand, we all know that without testing you don’t get anywhere near a properly working software product. So, we (grudgingly) write tests to make sure that our software does what we want.

That is to say, we do Functional testing.

We pass the software well-chosen input, for which we have a good idea about how the software should react. We compare the result with our expectation. If it matches, we are satisfied and go on. If it does not, we fix it until it does. We continue to write such tests until we run out of motivation or time, think we have handled all “interesting” cases, hit the release date or reach some kind of quality threshold (like a code coverage target).

What about robustness?

But what about Robustness testing?

Do you properly test all those weird edge cases and inputs no one will ever use? After all, no user will ever use a valid SQL statement as his user name, and certainly not something like: "Gotcha'); DROP TABLE USERS; --", right? And who would ever enter a floating-point number into an age field anyway?

Wrong. There are people who love to do just that! Just for the hell of it. Some people throw rocks from bridges, others type values no one will ever use into software systems. And then there are those with an altogether darker motivation. You know, the evil hackers sitting in some shady cellar room with their black hoodies pulled over their heads, crafting ransomware and stealing your IP. If your software is not robust against unexpected input, you may one day wake up and find a heap of CVE (Common Vulnerabilities and Exposures) alert mails flooding your boards and panicky CEOs asking pointed questions.

Okay, okay, so we have to do Robustness and Security testing as well?

You certainly should. Especially when working on software that may one day be employed on a cyber-physical system. Like a car. Or a surgical robot. Or a power plant.

Noticed that funny little word “may” right above? You are just working on an open-source JSON parsing library, so why should you care? Well, maybe in the future, without you even knowing, some vendor notices your library, decides it fits his use case perfectly and deploys it in his new surgical robot, which takes JSON-encoded commands from an operating station.

But this component is only ever called in a controlled way, so no need to do this. Well, that may be the case right now, but never is a really dangerous word in our rapidly changing world. How sure are you it won’t be called in a less controlled way next week? How about the hotfix for a service pack three major versions in the future written by a new colleague?

Hrm… But we don’t even have enough time to do functional testing properly! And where to even start? It is not like we even know what to expect from the system when we pass some weird values in…

The first Fuzzer

The good news: you are far from the first to face this problem.

Back in the day, in a time before my birth, developers used funny little punch cards to feed their programs with input. Those punch cards were costly, and wasting money on testing sounded just as attractive back then as it does today. So, what did they do to test their programs? They just picked whatever punch cards they could find in the bin or get from their colleagues and fed their programs with those, to see if their programs would crash or do something weird.

That, right there, was the first Fuzzer. It was soon followed by the idea of having a monkey sit in front of a keyboard (and mouse) and letting him play, in order to test programs that take user input via such means – the literal monkey test. In 1988, Barton Miller, a professor at the University of Wisconsin, coined the term Fuzz Testing. With his students, he fed Unix utilities with random input and observed that a substantial share of them (roughly a quarter to a third) crashed or hung.

Simple Fuzzing

Since then, using a simple random generator to produce input for testing software has always been an option. Of course, purely random input will struggle to reach deep into your code. There may be roadblocks for simple fuzzing, as we will discuss later, where pure randomness is unlikely to reach a certain branch. Simple fuzzers also struggle with well-structured input, like XML.

And yet, deploying a simple fuzzer is just as simple as the way it works. Have a somewhat complex method that takes two integer parameters? Why not write a quick test case, calling the method with foobar(Rand.Next(), Rand.Next()) a few thousand times? You write it once, a matter of a minute or two, and can exercise the program for hours or days without any interaction!
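To make this concrete, here is a minimal sketch of such a simple fuzzer in Python. Everything in it is an assumption made for illustration: `foobar` is a made-up method with a planted bug, `simple_fuzz` is a hypothetical helper, and the input range is deliberately kept small so that the interesting value shows up quickly.

```python
import random

def foobar(a: int, b: int) -> int:
    """Hypothetical method under test: a as an integer percentage of b."""
    return (a * 100) // b  # planted bug: raises ZeroDivisionError for b == 0

def simple_fuzz(target, runs: int = 10_000, seed: int = 0):
    """Call target with random integers and collect every crashing input."""
    rng = random.Random(seed)  # fixed seed, so a failing run can be replayed
    crashes = []
    for _ in range(runs):
        a, b = rng.randint(-100, 100), rng.randint(-100, 100)
        try:
            target(a, b)
        except Exception as exc:  # any unhandled exception counts as a finding
            crashes.append((a, b, type(exc).__name__))
    return crashes

crashes = simple_fuzz(foobar)
print(f"{len(crashes)} crashing inputs, first one: {crashes[:1]}")
```

Note that the fuzzer has no idea what the correct result of `foobar` is. It only watches for crashes, which is exactly why it is so cheap to write. The small input range matters, though: with the full integer range, the one crashing value for `b` would be hit far less often.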

There is little reason why we should not deploy simple fuzzers as a matter of principle alongside unit tests. It is simple, quick and yet quite powerful. It is an easy way to catch the low-hanging fruit among the bugs in our software. Remember that an attacker will most certainly deploy some kind of fuzzing against our software as a first step. If he finds many bugs in this initial step, he might (rightfully) decide that our software is a good target for an actual attack. But if our software turns out to be rather robust against his fuzzing attempts, he may switch over to another target altogether, unwilling to spend his efforts on our well-tested software!

Modern Fuzzing

As stated above, simple fuzzers often struggle to reach deep into your code. Can we do better while still keeping the process highly automated and adaptable?

Yes, we can.

A funny-named open-source project first released in 2013, American Fuzzy Lop (AFL; yes, like the cute rabbit), introduced modern fuzzing to the IT world. And with a loud bang. It found (and still finds) a downright tremendous number of bugs in software, especially in any kind of file parser. From picture parsing and processing libraries to XML or JSON or even proprietary formats – nothing was safe.

The big players of the IT world (Google, Microsoft, Mozilla) quickly adopted many of the concepts introduced by AFL in their own fuzzers and fuzzing services (like libFuzzer, OSS-Fuzz, OneFuzz) and use them at scale. And all of them used other kinds of fuzzing (some simple, some sophisticated) even before AFL’s success.

Fuzzing Success Stories (and lots of numbers)

Microsoft’s SAGE fuzzer has run for more than 1,000 machine-years since 2008 and found roughly one third of the bugs discovered by file fuzzing during the development of Windows 7. Google says that they find 80% of their bugs with fuzzing. Mozilla has found several thousand bugs in Firefox through fuzzing since 2004. Google is fuzzing their Chrome engine 24/7 on a cluster with more than 15,000 cores. Microsoft just published their OneFuzz project on GitHub under the MIT open-source license.

The latter is an Azure-based, self-hosted Fuzzing-as-a-Service solution intended to be embedded into continuous integration (CI) pipelines. It is an example of the next logical step for automated fuzz testing: tight integration, shift-left (test as early as possible) and maximized automation.

Do you have a CI pipeline? You probably do.

So why not add Fuzzing to it and find bugs early and with every commit?

How does automated fuzzing actually work?

Now, how does automated testing with fuzzing actually work? Surely it cannot be as easy as just calling Rand() for all input functions and being done, right?

Well, while it can be as easy as that, we do want our fuzzers to be more adjustable. Write it only once! We don’t want to write a fuzzer for each new method, program or project after all…

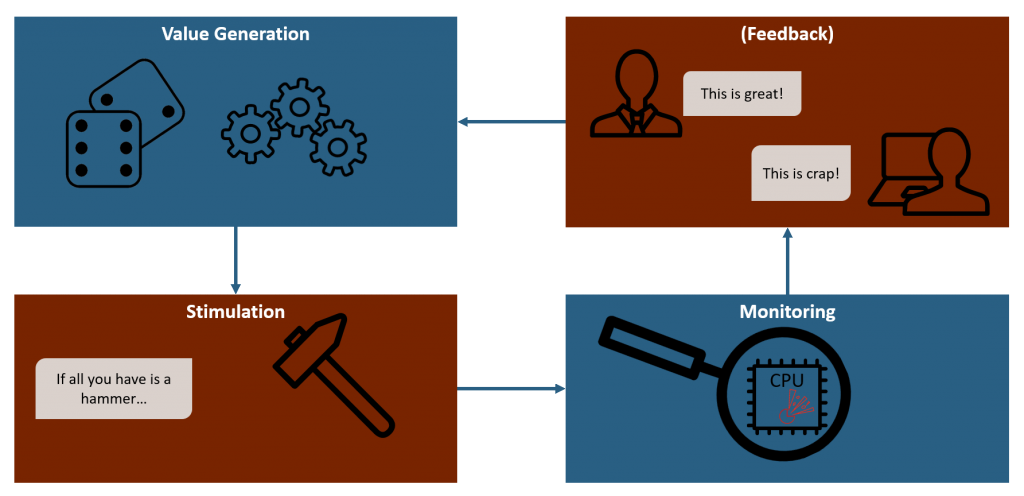

Fuzzing works with a tight loop consisting of three or four steps: Value Generation, Stimulation, Monitoring and Feedback. The Feedback step is the trademark of modern fuzzers; simple fuzzers do not use a feedback loop. Each of these steps is important if we want to create a powerful, adaptable fuzzer.
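As a rough illustration of that loop, here is a toy feedback-driven fuzzer in Python. It is a sketch under heavy assumptions, not how AFL or any real fuzzer is implemented: `target` is a made-up program that reports the branches it reaches through a plain set (real fuzzers get this information from code instrumentation), and `mutate` and `fuzz` are hypothetical helpers.

```python
import random

def target(data: bytes, coverage: set) -> None:
    """Hypothetical program under test; records which branches it reached."""
    if len(data) > 0 and data[0] == ord("F"):
        coverage.add("branch-1")
        if len(data) > 1 and data[1] == ord("U"):
            coverage.add("branch-2")
            if len(data) > 2 and data[2] == ord("Z"):
                raise RuntimeError("planted bug reached")

def mutate(data: bytes, rng: random.Random) -> bytes:
    """Overwrite one randomly chosen byte of an existing input."""
    buf = bytearray(data)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)

def fuzz(max_runs: int = 200_000, seed: int = 0):
    rng = random.Random(seed)
    corpus = [b"\x00\x00\x00"]  # inputs worth mutating further
    seen = set()                # every branch reached so far
    for run in range(1, max_runs + 1):
        candidate = mutate(rng.choice(corpus), rng)  # 1. value generation
        coverage = set()
        try:
            target(candidate, coverage)              # 2. stimulation
        except RuntimeError:                         # 3. monitoring
            return candidate, run
        if not coverage <= seen:                     # 4. feedback
            seen |= coverage
            corpus.append(candidate)  # keep inputs that reached new code
    return None, max_runs

crash, runs = fuzz()
print(f"crashing input {crash!r} found after {runs} runs")
```

The feedback step is what makes the difference here. Three purely random bytes match the planted bug only once in about 16.7 million attempts (256 to the power of 3), while this loop keeps every input that reaches a new branch and therefore only has to guess one byte at a time.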

Interested in all the dark magic going on within each of these steps?

Want to know about stuff like: mutation-based fuzzing, structure-aware fuzzing, grammar-based fuzzing, corpus, fuzz targets, code instrumentation, basic path code coverage, sanitizers or fuzzing roadblocks and how to get rid of them?

Alright! My next post(s) will cover the internals of Fuzzing in more detail.
