What is the software quality deficit? It is the time it takes for a product to evolve from its initial release until it is perceived as being of good quality. How long this takes depends on the number of copies of the software in circulation and how thoroughly it is used. More importantly, it depends on the number of iterations required to fix the bugs and on the time between those iterations. A deficit therefore exists until the desired quality is reached. That might take only a few quick iterations, but a large deficit will require many iterations over a long period before the software reaches good quality.

Quality deficits – only a problem of time-to-market?

This can leave you facing a commercial dilemma. Do you test thoroughly and risk delaying the launch date? Or do you do ‘enough’ testing and hit the launch date, knowing that you will have to fix problems down the road? In traditional software development methodologies, testing is conducted in the latter part of the project life cycle, usually during the QA process once all the finished components are being assembled. In industry, this is commonly referred to as ‘integration testing’. With this practice, many development teams treat testing as a function to be outsourced, often offshore to reduce costs.

Expensive bug-fixing

However, when testing is done this late in the process, fixing the resulting issues usually takes a long time, and the costs of fixing bugs late in development are extremely high. The cost of a late fix relative to an early one is often put at 5:1 for non-critical software, but 100:1 for bugs in critical software systems. The situation drags on because the original developer may have moved on to a different project, so a lot of time is lost analyzing and understanding the initial code base.

To address this dilemma, incremental development processes such as Agile and Scrum, promoted by the likes of Google, have gained ground. These methods focus on the rapid development of useful software. However, they can miss a critical point: making sure the software application has been thoroughly tested.

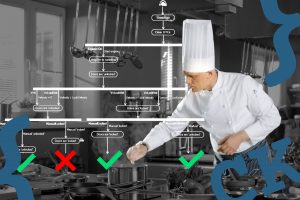

Eliminate shortcomings that prevent software quality

Whatever methodology is used, time-to-market pressure may remain the main reason for shipping without thorough testing. But there is now a sea change: development teams are increasingly measured on customer satisfaction and quality metrics. The way to close the quality deficit in software development is to eliminate these seven common shortcomings:

1. No clear set of requirements for the product.
2. Lack of a clearly defined API for each module, with tests for all boundary conditions.
3. Not taking a common-sense approach to testing at the logical, functional level.
4. Not using code coverage tools to ascertain testing completeness.
5. Not testing in layers until the faults are found, and using unit tests only where they are necessary.
6. Uncertainty about what needs to be re-tested when a change is made.
7. Not having an environment where anyone can run any test at any time.
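As an illustration of item 2, here is a minimal sketch of a module function with an explicit contract and unit tests aimed at its boundary conditions, using Python’s standard `unittest` module. The function `pass_rate` and its contract (including treating an empty test run as 0.0) are hypothetical, chosen only to show the idea.

```python
import unittest


# Hypothetical module API, for illustration only.
def pass_rate(passed: int, total: int) -> float:
    """Return the share of passed tests as a percentage in [0, 100].

    Contract (assumed for this sketch):
    - negative counts, or passed > total, raise ValueError
    - an empty run (total == 0) returns 0.0
    - the result is rounded to two decimal places
    """
    if passed < 0 or total < 0:
        raise ValueError("counts must be non-negative")
    if passed > total:
        raise ValueError("passed cannot exceed total")
    if total == 0:
        return 0.0
    return round(100.0 * passed / total, 2)


class PassRateBoundaryTests(unittest.TestCase):
    """One test per edge of the contract, not just the typical case."""

    def test_empty_run(self):
        self.assertEqual(pass_rate(0, 0), 0.0)

    def test_lower_bound(self):
        self.assertEqual(pass_rate(0, 10), 0.0)

    def test_upper_bound(self):
        self.assertEqual(pass_rate(10, 10), 100.0)

    def test_rounding_inside_range(self):
        self.assertEqual(pass_rate(1, 3), 33.33)

    def test_just_outside_upper_bound(self):
        with self.assertRaises(ValueError):
            pass_rate(11, 10)

    def test_negative_count(self):
        with self.assertRaises(ValueError):
            pass_rate(-1, 10)
```

The tests can be run with `python -m unittest <file>`. Because the contract states what happens at each edge (zero, the maximum, and invalid input), each edge gets its own test, and a later change that breaks one of them is caught immediately.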

The solution – implement a robust software testing process

Implementing a software development process that builds quality into every software application your organization ships will not happen overnight, nor will it be simple. But you can’t expect customer loyalty if you rely on field usage to uncover the majority of software issues. The current trend towards IoT, connected devices and ubiquitous computing, where software is present everywhere and at all times, makes this even more critical. Implementing a robust software testing process is the way to prevent a software quality deficit from the very first version.

This post is the first in a series addressing each of the shortcomings above in detail, especially in the context of modern software development processes.